BRUSSELS (AN) – Little more than a week after Russia's full-scale invasion of Ukraine in February, a new tool for fighting disinformation online found two pro-Russian hashtags surfacing in a surprising range of languages.

The results showed pro-Russian influence networks weren't targeting the West, but were focused on the five leading emerging economies collectively known as BRICS: Brazil, Russia, India, China, and South Africa.

The new multilingual tool, called BEAM, mapped the hashtags #IStandWithPutin and #IStandWithRussia by language as the 193-nation U.N. General Assembly voted on March 2 to condemn Russia's invasion of Ukraine a week earlier. The hashtags appeared to have been nonexistent until just one day before the vote.

Many of the accounts BEAM detected were created only recently and all followed a common pattern: a small burst of activity on the day of the invasion, a flurry of posts on the day of the U.N. vote, then a steep decline in the week that followed.

That analysis "exposed clusters of accounts that had distinctively different national and linguistic identities," said a report last month from the tool's co-developers, the Institute for Strategic Dialogue and CASM Technology, both based in London.

Some of the propaganda-spreading accounts used Hindi, Farsi and Sindhi. A Tamil cluster falsely claimed to be English speakers from South Africa and Nigeria.

“When we dove in and saw it was all of these non-European, Global South identities being used to push the hashtags at a point when so much of the conversation was focused on Russian influence operations targeting Ukraine, Western Europe or America, that was a big eye opener,” Carl Miller, co-founder of CASM, the Center for the Analysis of Social Media, said in an interview.

That pro-invasion propaganda was not focused on the Global North came as a surprise, but the findings underlined the importance of BEAM's core capability: its fluency in 17 languages.

What it uncovers is not always made public, the report said, but broad themes it has been used to analyze include "election denial conspiracy theories, public health and vaccine misinformation, and analysis of foreign state actor activity targeting American social media users."

This year, it was used to investigate disinformation in the months ahead of the April presidential election in France, the November midterm elections in the U.S., and the November U.N. climate summit at Sharm el-Sheikh, Egypt.

'Blog sentiment analysis'

The machine learning and analytics technology behind BEAM is called Method52, developed by CASM over the past decade to research social media use. In 2014, when CASM partnered with ISD to develop the tool, public views of disinformation were far less evolved.

"In the beginning, it was an extremely small field," Miller said. "We were considered slightly cranky researchers that were trying to replicate the study of attitudes using social media rather than polling data."

Francesca Arcostanzo, now the digital methods lead within ISD’s Digital Research Unit, was privy to Method52's earliest builds as a PhD researcher at the University of Milan. At the time, synergies between the computational and political sciences were in their infancy.

"Text analysis was exploding, and everyone in political science was excited about the free availability of text data, but it was difficult to collect," she said in an interview. "Back then, we were calling it 'blog sentiment analysis.' The focus was not on disinformation, but on the politicization and polarization around specific issues."

The first modern information threats that drew mass media attention came from al-Qaida and Islamic State extremists' sophisticated use of social media to radicalize and recruit foreign fighters.

Their networks harnessed the power of platforms like Facebook to spread propaganda to vulnerable individuals and, in the case of Islamic State, even facilitated recruits' travel to join its self-styled “caliphate” carved from lands in Syria and Iraq.

Millions of vanishing messages

In 2016, CASM and ISD researchers conducted live monitoring of the huge amount of Twitter activity claiming the U.S. presidential election was rigged. Soon after Donald Trump was elected, the accounts they were monitoring suddenly vanished into thin air. They had accidentally stumbled onto Russian interference.

"It literally all just turned off. Millions and millions of these messages to nothing," Miller recalled. "I don't think we had any inkling that there might have been some kind of coordinated or hidden attempts to try and skew that conversation. Rather naively, we thought it was organic."

Only six years later, Russian interference in U.S. elections is a well-documented phenomenon.

Russian figures such as Yevgeny Prigozhin, a close ally of Russian President Vladimir Putin and founder of the notorious Wagner Group, have bragged openly about Russian efforts to disrupt the 2022 U.S. midterm elections. Wagner, a Russian mercenary outfit deployed worldwide, has been accused of committing human rights abuses in Africa, Syria, and Ukraine.

But with so much focus on disinformation campaigns targeting the West, less attention is paid to influence campaigns spreading elsewhere around the globe, such as Russian efforts to blame the global food crisis on Western sanctions.

BRICS and African nations facing severe food shortages are reluctant to pick sides in the war because Ukraine and Russia are both major exporters of food and agricultural products. In 2021, the two nations' combined exports accounted for 30% of the global supply of wheat and 78% of the global supply of sunflower oil.

Many countries across Africa rely heavily on Russia and Ukraine for their imports of wheat, fertilizer, and vegetable oils, but the war also has disrupted the broader flow of trade and commodities to Africa, putting further pressure on already high food prices.

The data suggests these trends have contributed to making some of the most affected nations more attractive targets for Russian influence campaigns than the U.S. and European Union states, which have been leading the sanctions regime.

AI isn't 'magical'

Journalists, lawyers, activists, fact-checkers, regulators and governmental decision-makers have now used BEAM to help more than 350 civil society organizations across 10 countries confront information threats.

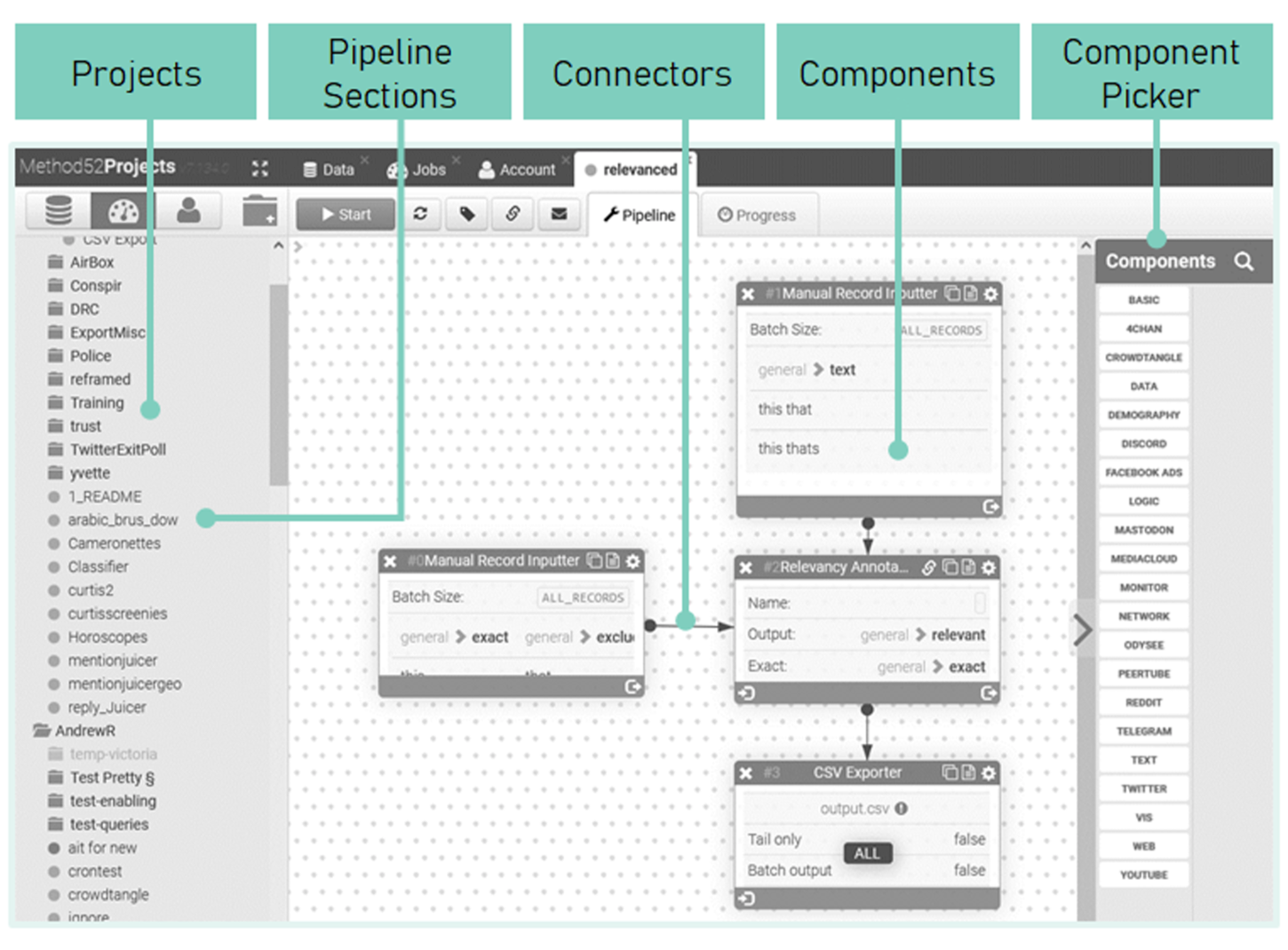

In its current build, BEAM is able to collect data from Reddit, Telegram, Twitter, YouTube, PeerTube, 4chan, Discord, Mastodon, "mainstream media" news sources and other websites.

The complexity of creating legible datasets from the near-infinite information output of social media sites means BEAM is not a plug-and-play tool, and every deployment requires a bespoke “architecture.”

These so-called BEAM architectures are tailored combinations of artificial intelligence algorithms and language-based network analytics, paired with language processing models trained to recognize specific hate speech lexicons, propaganda talking points, or conspiracy theory tropes.

Every time BEAM is deployed, teams of developers, subject matter experts, data journalists, open-source intelligence practitioners, and BEAM architects program and train new modules to incorporate the necessary languages, social media platforms, and semantic detection algorithms for each project.

When New Zealand's Royal Commission employed BEAM's technology as part of its response to the 2019 Christchurch shooting, for example, the tool was taught how to analyze the blend of far-right, Islamist and far-left extremism that has developed in the specific context of New Zealand's online ecosystems.

Similar adjustments are made to accommodate the unique requirements of every project. When the European Commission requested a report about online antisemitism during the pandemic in French and German, linguists and hate-speech experts trained new models to identify it in those two languages.

And when the U.S. State Department's Global Engagement Center sponsored a project to investigate the potential for "state-sponsored influence operations on Wikipedia," BEAM needed its backend developers to program new capabilities to analyze the site. The crowdsourced encyclopedia, which Miller described as an "enormously overlooked" online platform for such research, has sophisticated defenses against disinformation, but its universal reach makes it a big target.

A continually evolving tool

Every time there's a new project, BEAM's capabilities snowball. Once a new module is added, it becomes part of BEAM. That makes for an ever-expanding range of inputs and outputs to adapt to research requirements.

“We use lots of ethnographic work combined with more computer-assisted work in order to understand the spaces and how they operate,” said Arcostanzo. “Everything is rooted in the mixed-skill nature of the teams.”

The disinformation space moves fast, so BEAM’s back end must be able to adapt quickly to geopolitical shifts, she added.

Russia's invasion of Ukraine, for example, caused two platforms to surge in importance overnight: VK, the Russian analogue of Facebook, and Telegram, the messaging app of choice for Russian military bloggers and propagandists.

The co-developers said they've produced more than 45 public research reports that expose promoters of disinformation and hate online. ISD, a nonprofit entity, and CASM, a for-profit company, are selective about who they choose to work with.

BEAM has now been used to generate more than 90 non-public data briefings for legal, security and government partners, 28 public investigations, 150 media exposés of disinformation and 15 reports of credible threats to the authorities.

“We want to talk more publicly about the whole array of talents which you need to study disinformation to push back against this false idea that some magical proprietary machine learning-based algorithm is going to be the thing that gets us through the challenge of the information space being polluted,” Miller said. “It is almost always a mashing together of very automated ways of handling very large amounts of data, and very human, manual ways of understanding it.”

'The truth alone doesn't work' in the fight against disinformation

So what's been learned through all of this? Miller said he's convinced by the research that simply countering information warfare with more information "is a losing strategy for the West," because reason alone can't win these battles.

"You don't fight these kinds of illicit influence operations with the truth. The truth alone doesn't work," he said. "Disinformation is not principally what we are struggling against. We don't have a brilliant vocabulary to describe the shadowy world of illicit influence. It's a tradecraft that encompasses everything from manipulating search engines to exploiting identity and harnessing outrage."

Setting up the problem as a simple matter of lies on the internet is overly rationalist, Miller said. The amount of agency that the current disinformation discourse ascribes to social media platforms understates the power of the ethnic, religious, and national identities that influence campaigns prey upon.

Two of the most consequential impacts of online disinformation, seen in Myanmar and India, bear this out in grim detail.

At the time of the Rohingya genocide, Facebook employed just one Burmese-language content moderator, and significant blame has been placed on the company for fanning the flames of Buddhist nationalism.

Internal documents leaked by Facebook whistleblowers earlier this year revealed that Indian users were being inundated with anti-Muslim hate speech, coinciding with an uptick in violence across the country.

But the sentiments fueling the violence, in both cases, were homegrown.

“Debunking or putting the truth to the lie doesn’t work,” said Miller, “when you’re dealing with identity and emotion.”

The 'silver lining'

Arcostanzo, too, emphasized the common misconception that disinformation research amounts to fact-checking.

“We rarely try to understand whether any piece of information is, in itself, correct or incorrect; if it is ‘fake news’ or not,” she said. “The focus is more on the big picture of how the spread of content happens and what its relationship is with opinion formation or political belief rather than to understand what’s true, what’s false, and to what extent.”

While efforts to protect information spaces are still in their infancy, Miller's team has come a long way from their days as "slightly cranky researchers." And with this once-niche field of study entering the mainstream, he believes the scales will tip against disinformation as the world invests more resources in protecting against it.

"For me, the silver lining is that we can do something about this problem. Ultimately, we want to get a point where we can actually declare a sort of 'peace' in information spaces, whether through deterring people who want to weaponize it, or building norms and values to protect it," said Miller. "We are making progress."